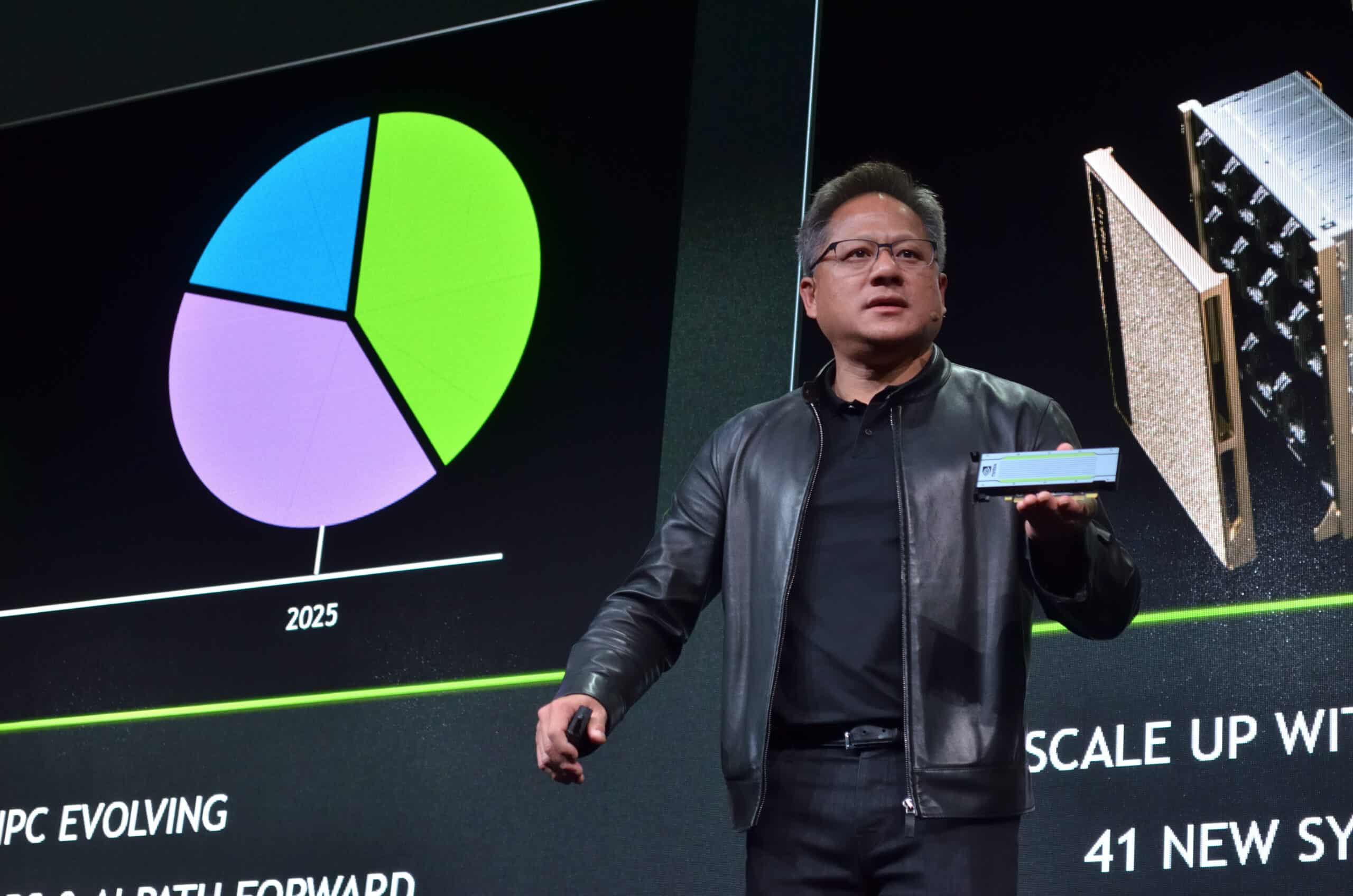

Nvidia’s (NASDAQ:NVDA) annual GTC conference this week in San Jose delivered more than the usual GPU fireworks. CEO Jensen Huang stepped onstage and made good on his promise of “a chip that will surprise the world,” unveiling the Vera Rubin platform built for the agentic AI era.

While I had thought a revolutionary AI CPU would be the centerpiece — especially after Nvidia locked in a landmark multi-year deal with Meta Platforms (NASDAQ:META) to deploy Grace and Vera servers starting in 2027 — the spotlight landed squarely on inference infrastructure.

Huang declared the arrival of the inference inflection point and sketched a path to $1 trillion in cumulative revenue from Blackwell and Vera Rubin systems through 2027. Yet the stock barely budged. Investors appear to be asking: Is this truly Nvidia’s next growth catalyst, or just another impressive but already-priced-in milestone?

A Full-Stack Bet on Agentic Workloads

At the heart of the reveal is the Vera Rubin architecture, pairing next-generation Rubin GPUs with a purpose-built rack of 88-core Vera CPUs. Unlike traditional GPUs optimized for massive parallel training, the Vera CPU targets the sequential, low-latency orchestration required by autonomous AI agents: tasks like reasoning chains, real-time decision-making, and data preprocessing that hyperscalers now demand at scale.

Meta’s commitment to deploy Vera CPU-only servers in 2027 alongside Blackwell and Rubin GPUs signals a broader industry shift. Other partners, including Alibaba (NYSE:BABA), ByteDance, and Oracle (NYSE:ORCL), are lining up for similar full-stack deployments. The platform also integrates Groq 3 LPX inference accelerators and BlueField-4 networking, creating what Huang called “AI factories” capable of handling pre-training, post-training, and test-time scaling in one cohesive system. Liquid-cooled racks supporting 256 units deliver twice the efficiency of legacy CPU designs, positioning Nvidia to capture not just GPU sales but an entire data-center stack.

Doubling the Roadmap

Huang dramatically raised Nvidia’s guidance, projecting at least $1 trillion in orders for the combined Blackwell and Vera Rubin generations — double the $500 billion target shared at last year’s GTC. The upgrade rests on the “inference supercycle” now underway. Agentic AI workloads are exploding beyond simple chatbots into enterprise automation, robotics, and consumer applications that require constant reasoning and adaptation.

This creates a fresh revenue runway beyond GPUs. Analysts have long talked about a potential “CPU supercycle” as cloud giants balance their fleets for complex agentic systems. By extending its CUDA ecosystem to CPUs and inference silicon, Nvidia aims to diversify away from pure GPU cyclicality while locking in higher-margin, full-stack contracts. The Meta partnership alone could accelerate adoption across hyperscalers seeking the same balanced infrastructure Google and Amazon (NASDAQ:AMZN) are quietly evaluating.

Market Skepticism Remains Despite the Scale

Despite the headline-grabbing numbers, Wall Street greeted the news with a shrug. Nvidia shares have traded in a narrow range for more than six months, reflecting AI hype fatigue and sky-high expectations. UBS analyst Timothy Arcuri had warned ahead of the event that it would be “hard to see Nvidia being able to provide thesis-altering commentary that creates a breakout for the stock.” His caution proved prescient.

Investors appear to have already baked in explosive growth. The $1 trillion figure, while massive, lacked a single jaw-dropping surprise (such as radically new pricing power or immediate 2026 revenue acceleration) to overcome valuation concerns. Competitive pressure from custom silicon at the big cloud players and Advanced Micro Devices’ (NASDAQ:AMD) continued CPU push also tempered enthusiasm. In short, the bar for a true breakout has risen so high that even a credible agentic AI roadmap failed to clear it on day one.

Key Takeaway

Nvidia entered GTC carrying heavy expectations after months of sideways trading. The leap to a $1 trillion inference opportunity marks a genuine strategic pivot from GPU monopoly to AI platform leader, potentially igniting the long-awaited CPU supercycle and cementing dominance in the agentic era. Meta’s 2027 Vera deployment and the full-stack efficiency gains underscore that this is no incremental update — it is Nvidia’s clearest bet yet on the next phase of AI infrastructure.

Whether this becomes the inflection point investors have been waiting for depends on execution and hyperscaler spending in 2026 and 2027. For now, the market’s muted reaction reflects realism more than doubt: Nvidia remains the undisputed frontrunner in AI silicon and software. The $1 trillion vision is real; the question is simply how quickly Wall Street will price it in.