Happy Friday, all. Here’s some only-in-2026 math for you.

“If you buy too much compute, you go out of business. If you buy too little compute, you can’t serve your customers.” That’s Krishna Rao, Anthropic’s CFO, on the Invest Like the Best podcast laying out the existential math of running a frontier AI lab. Under-buy and “you’re not at the frontier. Same thing.”

Rao says he spends 30 to 40% of his time on compute decisions alone. He calls compute “the lifeblood of our business” and “the canvas on which everything else gets built.” I’ve been following the AI infrastructure trade for three years now, and this is the cleanest articulation I’ve heard of why three public companies are simultaneously suppliers and shareholders of the same private AI lab.

The three-chip stool

Anthropic spreads its bet across Nvidia GPUs, Amazon’s Trainium, and Google’s TPUs. Fungibility is the point. Get locked into one vendor and you inherit their roadmap risk.

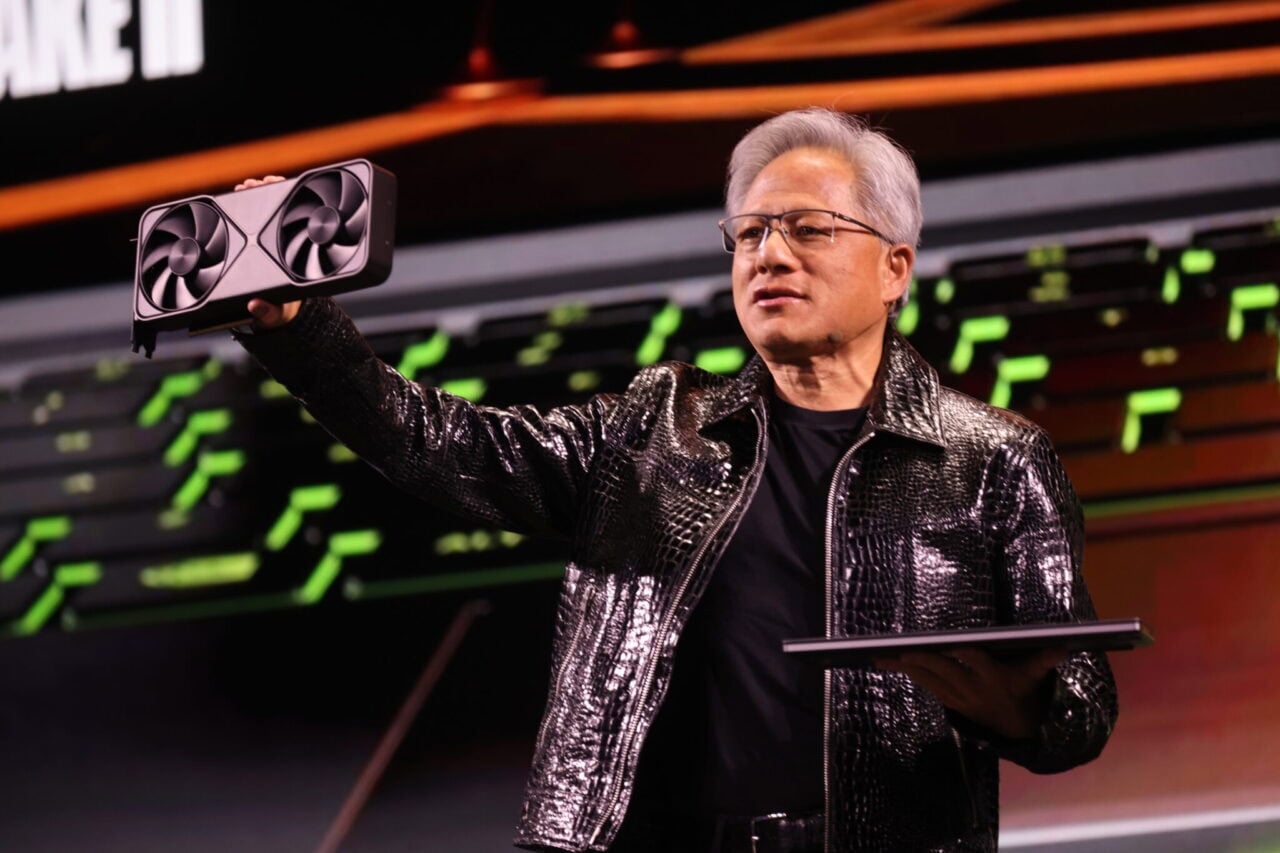

Nvidia: the incumbent

NVIDIA (NASDAQ:NVDA) disclosed that “Anthropic will run and scale on NVIDIA infrastructure, initially adopting 1 gigawatt of compute capacity with Grace Blackwell and Vera Rubin systems.” Q4 FY2026 data center revenue hit $62.31B, up 75% year over year, and the stock is up roughly 20% in the past month. Total supply commitments sit at $95.2B.

Amazon: the deepest collaboration

Amazon (NASDAQ:AMZN) hosts Project Rainier, with over 500,000 Trainium2 chips training Claude. CEO Andy Jassy said on the Q1 call that AWS has “over $225 billion in revenue commitments for Trainium” and a $364 billion backlog that excludes a recent Anthropic deal worth over $100 billion. Rao said Anthropic works “from the chip level up” with Amazon’s Annapurna Labs because Anthropic’s workloads “stress the limits of what these chips are capable of.”

Google: the third leg

Alphabet (NASDAQ:GOOGL) supplies TPUs and is also an Anthropic backer. Google Cloud revenue grew 63% to $20.03B in Q1 2026, with backlog nearly doubling to over $460 billion. 2026 capex guidance: $175 to $185 billion.

The cone of uncertainty

Rao said that “really small movements in monthly or weekly growth rates result in compounding very, very different outcomes,” and that one to two years out “the range of outcomes starts to be really, really wide.” Linear planning fails in exponential markets.
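The sketch below shows why the range fans out. It assumes demand is normalized to 1.0 today and grows at a constant monthly rate. The 8%, 10%, and 12% scenarios and the function names are hypothetical illustrations, not figures from Rao or Anthropic.

```python
# Hypothetical illustration: small differences in a constant monthly growth
# rate compound into a widening range of outcomes over longer horizons.
# The rates and horizons are assumptions, not figures from Rao or Anthropic.


def growth_multiple(monthly_rate: float, months: int) -> float:
    """Demand multiple after `months` of constant compounding at `monthly_rate`."""
    return (1.0 + monthly_rate) ** months


def outcome_range(rates: list[float], months: int) -> tuple[float, float]:
    """Lowest and highest demand multiple across the candidate growth rates."""
    multiples = [growth_multiple(r, months) for r in rates]
    return min(multiples), max(multiples)


if __name__ == "__main__":
    rates = [0.08, 0.10, 0.12]  # hypothetical monthly growth scenarios
    for months in (6, 12, 24):
        low, high = outcome_range(rates, months)
        # The high/low ratio measures how wide the "cone" has become.
        print(f"{months:>2} months: {low:5.2f}x to {high:5.2f}x "
              f"(high/low = {high / low:.2f})")
```

Under these assumptions, a four-point spread in monthly growth puts the high case about 1.2x above the low case after six months, about 1.5x after a year, and roughly 2.4x after two years. The rate disagreement stays the same size. The cost of guessing wrong is what grows, and it grows the further out you commit capacity.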

Rao calls Anthropic “the most efficient users of compute amongst any of the frontier labs.” If you own NVDA, AMZN, or GOOGL on the thesis that AI infrastructure spending compounds, Rao’s framework is the bull case in his own words. The risk is the same sentence flipped: buy too much capacity, go out of business. Three vendors, one customer, one existential decision compounding into 2028.