Artificial intelligence has a funny way of turning obscure hardware components into economic kingmakers. A year ago, most investors barely thought about DRAM prices. Today, they may matter as much to AI growth as Nvidia (NASDAQ:NVDA) GPUs. Why? Because AI models do not just need processing power. They need massive amounts of memory to move, store, and retrieve data in real time.

That is where the latest numbers out of South Korea should grab investors’ attention. According to export pricing data, DRAM export prices from Korea have surged 497% over the past year. Flash memory and high-bandwidth memory (HBM) prices have also climbed sharply, up roughly 352% and 166% respectively, but DRAM stands in a class by itself. The question now is, does this choke off AI growth, or does it simply make the memory makers richer?

The AI Memory Crunch Is Getting Real

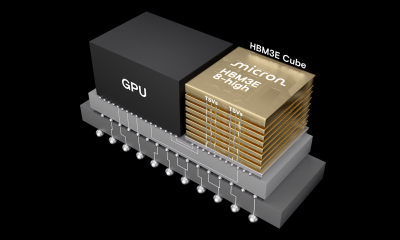

South Korea matters because it sits at the center of the global memory market. Samsung Electronics and SK Hynix together dominate DRAM and HBM production, while Micron Technology (NYSE:MU) controls much of the remaining supply. In fact, Samsung, SK Hynix, and Micron collectively provide roughly 95% of the world’s DRAM supply.

That concentration matters because DRAM is becoming AI infrastructure’s hidden tollbooth.

AI servers use dramatically more memory than traditional cloud servers. Training large language models requires constant high-speed data movement between GPUs and memory chips. Essentially, AI systems cannot think quickly if they cannot access information quickly.

Memory suppliers are struggling to keep up with demand from hyperscalers building AI data centers. The four largest hyperscalers are collectively spending as much as $725 billion on AI infrastructure. GPUs grab the headlines, but memory has quietly become the supply chain constraint.

Here is what recent pricing trends look like:

| Memory Type | Unit Price | YoY Price Increase |
| --- | --- | --- |
| DRAM | $89,498/kg | 497.4% |
| NAND Flash | $67,307/kg | 351.6% |
| HBM | $78,752/kg | 165.5% |

Source: Korean Customs Service.
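
A percentage increase is easy to misread as a multiple, so it can help to convert one into the other. The short Python sketch below takes the three rows from the table above and works out the price multiple each increase implies. It also backs out a year-ago price, which is a derived estimate rather than a figure reported in the customs data. The function names are illustrative only.

```python
# Figures copied from the table above (Korean Customs Service).
EXPORT_PRICES = {
    # memory type: (unit price in USD per kg, YoY increase in percent)
    "DRAM": (89_498, 497.4),
    "NAND Flash": (67_307, 351.6),
    "HBM": (78_752, 165.5),
}


def price_multiple(yoy_increase_pct: float) -> float:
    """Convert a percentage increase into a multiple of the starting price."""
    return 1 + yoy_increase_pct / 100


def implied_prior_price(current_price: float, yoy_increase_pct: float) -> float:
    """Back out the year-ago price implied by today's price and the YoY increase.

    This is an estimate derived from the two table columns, not reported data.
    """
    return current_price / price_multiple(yoy_increase_pct)


for memory_type, (price, pct) in EXPORT_PRICES.items():
    multiple = price_multiple(pct)
    prior = implied_prior_price(price, pct)
    print(f"{memory_type}: {multiple:.2f}x, implied year-ago price ~${prior:,.0f}/kg")
```

On these numbers, a 497.4% increase means DRAM now sells for roughly six times its year-ago export price, not five times.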

Surprisingly, this is not just an AI data center issue anymore. Consumer electronics makers, PC manufacturers, and smartphone producers are also getting squeezed as memory contract prices rise across the board.

Why Micron May Be the Biggest Surprise Winner

Most investors immediately think of Nvidia when discussing AI infrastructure. Yet memory makers may end up being the quieter long-term winners because they control a resource AI cannot operate without. Micron Technology offers a good example.

Micron’s most recent earnings release showed revenue tripling year over year to $23.9 billion for its fiscal second quarter. Adjusted earnings climbed nearly eightfold to $12.20 per share from $1.56 per share last year. Data center revenue quadrupled year over year, driven largely by HBM demand tied to AI servers.

Investors have noticed. Micron stock has risen more than 700% over the past year as memory pricing tightened and AI demand accelerated.

Here is how the three DRAM giants stack up:

| Company | Estimated DRAM Market Share | Key AI Advantage |
| --- | --- | --- |
| Samsung Electronics | ~40% | Scale and vertical integration |
| SK Hynix | ~35% | HBM leadership for AI GPUs |
| Micron Technology | ~20% | Fastest earnings growth tied to AI demand |

Granted, memory markets have always been cyclical. Prices spike when supply tightens, then fall sharply once new production comes online. Smart investors should remember that history before assuming today’s profits last forever.

That said, AI may be changing the cycle’s duration. Unlike smartphones or PCs, AI infrastructure spending is still in the early innings. Hyperscalers are racing to build capacity before competitors gain an edge.

Will Higher Memory Prices Slow AI Growth?

Probably not — at least not yet. The bigger risk is margin pressure, not demand destruction. A single AI server loaded with Nvidia GPUs can cost more than $300,000. If memory costs rise another 50%, the overall economics still work for companies monetizing AI services across billions of users. In other words, memory inflation raises the price of entry, but it does not necessarily stop construction.
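
To see why a 50% memory price increase need not break the economics, here is a rough sensitivity sketch in Python. The $300,000 server cost and the 50% increase come from the paragraph above. The share of a server's cost that goes to memory is not given in the source, so the 10%, 20%, and 30% values below are purely hypothetical placeholders, as is the function name.

```python
SERVER_COST_USD = 300_000      # from the article: a GPU-loaded AI server
MEMORY_PRICE_INCREASE = 0.50   # from the article: memory costs rise another 50%


def server_cost_after_increase(
    server_cost: float, memory_share: float, memory_price_increase: float
) -> float:
    """Return total server cost after memory prices rise.

    memory_share is the fraction of the original server cost spent on memory.
    Only the memory portion is repriced; everything else is held constant.
    """
    memory_cost = server_cost * memory_share
    return server_cost + memory_cost * memory_price_increase


# Hypothetical memory shares: the article does not state the real figure.
for memory_share in (0.10, 0.20, 0.30):
    new_cost = server_cost_after_increase(
        SERVER_COST_USD, memory_share, MEMORY_PRICE_INCREASE
    )
    pct_change = (new_cost / SERVER_COST_USD - 1) * 100
    print(
        f"memory share {memory_share:.0%}: "
        f"new cost ${new_cost:,.0f} ({pct_change:.1f}% higher)"
    )
```

Under these assumed shares, the total server cost rises by only a fraction of the memory increase, which is the sense in which higher memory prices squeeze margins more than they destroy demand.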

To put that into perspective, if a hyperscaler spends $10 billion building AI infrastructure, paying a few billion more for memory may hurt profits temporarily, but it is unlikely to halt expansion plans altogether.

Regardless of how you look at it, the pricing surge strengthens the hand of memory producers. The world’s AI ambitions currently rest on a remarkably narrow supply chain controlled by just three companies. That creates both opportunity and risk.

Key Takeaway

In short, DRAM has quietly become one of artificial intelligence’s most valuable bottlenecks. Korean export data showing a 497% jump in DRAM pricing highlights how strained the AI supply chain has become.

For investors, the winners are becoming clearer. Samsung, SK Hynix, and Micron sit in an enviable position because they control roughly 95% of global DRAM production. When all is said and done, AI cannot scale without memory, regardless of how many GPUs companies buy.

Granted, memory booms eventually cool off. They always have. But today’s AI spending wave looks far larger and longer-lasting than prior tech cycles. That means the real story may not be whether memory prices rise. It may be how long these companies can keep charging more before supply finally catches up.